Intelligent Design

Neuroscience & Mind

Passing the Turing Test Is No Guarantee of True AI

As I noted earlier, at COSM 2023 futurist Ray Kurzweil predicted that by 2029 AI will pass the “Turing test,” effectively making it impossible for us to distinguish computer intelligence from that of a human being. As I explained,

The “Turing test” was developed by Alan Turing, the famous British computer scientist and World War II codebreaker depicted by Benedict Cumberbatch in the Academy Award-winning movie The Imitation Game. In 1950, Turing proposed that we could say that computers had effectively achieved humanlike intelligence when a human investigator could not distinguish the performance of a computer from that of a human being. The test has seen many variations and criticisms over the years, but it remains the gold standard for evaluating whether we have created true AI.

AT COSM ’23, FUTURIST RAY KURZWEIL PREACHES THE GOSPEL OF ARTIFICIAL INTELLIGENCE | MIND MATTERS

What exactly are these criticisms and why are they relevant?

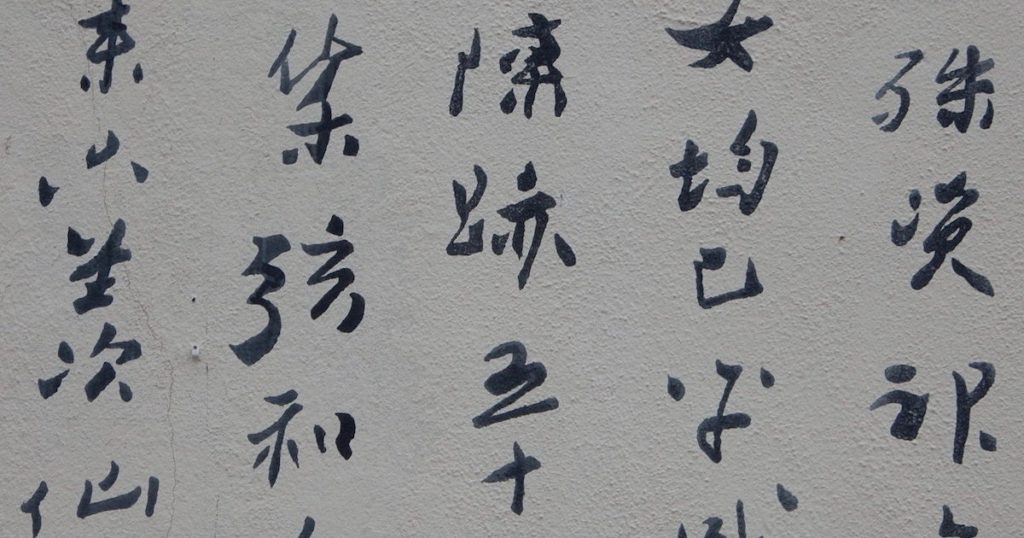

Perhaps the most famous critic of the Turing test has been philosopher John Searle, who proposed the “Chinese Room” as a thought experiment that shows why something could pass the “test” and yet be nothing like human intelligence. The Chinese Room came up during the Q&A sessions at COSM 2023, so it’s worth exploring further.

Exploring the Chinese Room

Under this hypothetical scenario, a person who speaks no Chinese whatsoever sits in a room lined with file cabinets that hold questions and answers, all written in Chinese. From outside the room, users pass in cards bearing questions in Chinese. The person inside cannot read them, but he can match the symbols on each card to symbols he finds in the file cabinets. When he finds a match, he passes the corresponding answer card back to the person outside the room.

The person outside the room receives the correct answer in Chinese and assumes there is someone inside the Chinese Room who speaks Chinese and understands the questions. But what’s really happening is that a person who understands no Chinese is matching up symbols on the question to find the card with the answer. Now the right answer was preprogrammed by someone who built the file cabinets and does understand Chinese — but the person in the Chinese Room who picked the card had nothing to do with that.

Computers Must Be Programmed

The relevance to the Turing test should be obvious: Computers might be programmed to read inputs and then use a large data library to match the input to some preprogrammed output. But the computer wouldn’t “understand” the question — it would give the right answer only because it was preprogrammed by human intelligence to match inputs to the right outputs. The Turing test might therefore validate the illusion of intelligence when no real intelligence or understanding is present.
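To make the point concrete, here is a minimal sketch of a Chinese Room written as a program. Everything in it is an illustrative assumption rather than anything Searle specified: the name `FILE_CABINET`, the function `chinese_room`, and the two sample question-and-answer cards are all hypothetical. The sketch simply shows that exact symbol matching against a prewritten library can return a correct answer without any step that interprets the question.

```python
from typing import Optional

# Hypothetical "file cabinet": question cards mapped to prewritten answer cards.
# The entries are illustrative; in the thought experiment they are prepared
# ahead of time by someone who does understand Chinese.
FILE_CABINET = {
    "你好吗？": "我很好，谢谢。",
    "天空是什么颜色？": "天空是蓝色的。",
}


def chinese_room(card: str) -> Optional[str]:
    """Return the answer card filed under an exactly matching question card.

    The lookup compares symbols only. Nothing here parses, translates,
    or otherwise interprets the text on either card.
    """
    return FILE_CABINET.get(card)


# A card passed in from outside the room.
print(chinese_room("你好吗？"))
```

A card that is not in the cabinet simply returns `None`. Whatever appearance of fluency the room has comes entirely from whoever filled the cabinet.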

Though Turing formulated his test in 1950, the same pitfalls apply to modern AI. AI might give us impressive answers to our questions. But that doesn’t mean it has a humanlike understanding of what is going on, much less true sentience or consciousness. Modern AI might be nothing more than a super-sophisticated Chinese Room, and the Turing test would never be able to tell the difference.

In light of these obstacles, I’d like to propose the Meta-Turing Test: A true test of AI will be able to distinguish between AI and human intelligence, even when the two seem indistinguishable to the average human being.