Neuroscience & Mind

The Myth of “Deep Learning”

I’ve been reviewing philosopher and programmer Erik Larson’s The Myth of Artificial Intelligence. See my earlier posts, here, here, and here.

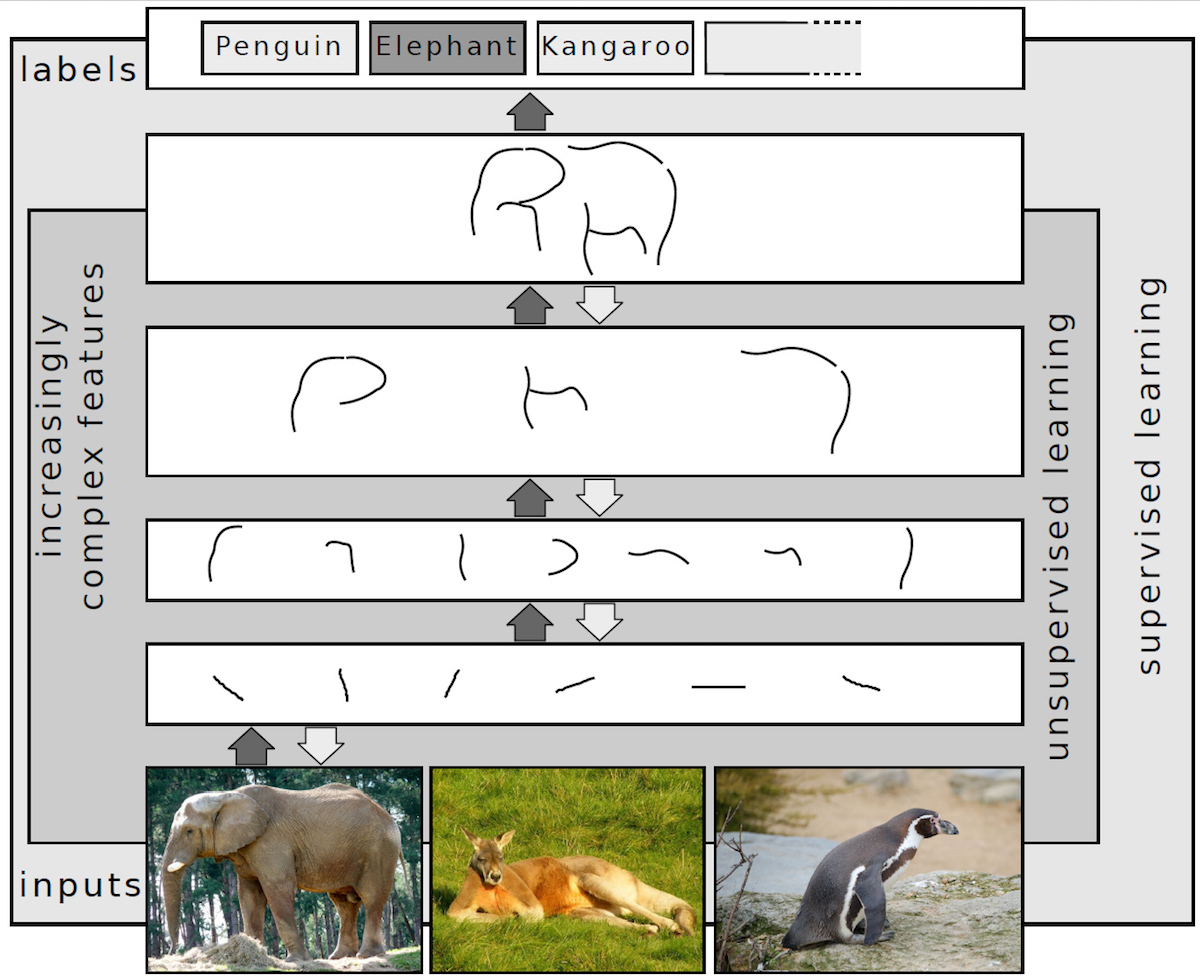

“Deep learning” is as misnamed a computational technique as exists. The term actually refers to multi-layered neural networks, and, true enough, those multiple layers can do a lot of significant computational work. But the phrase “deep learning” suggests that the machine is doing something profound and beyond the capacity of humans. That’s far from the case. The Wikipedia article on deep learning is instructive in this regard. Consider the following image used there to illustrate deep learning:

Note the rendition of the elephant at the top, and compare it with the image of the elephant at the bottom, which is how we experience it. The image at the bottom is rich, textured, colorful, and even satisfying. What deep learning extracts, rendered at the top, is paltry, simplistic, black-and-white, and unsatisfying. What’s at the top is what deep learning “understands.” In fact, its “understanding,” whatever we might mean by the term, cannot progress beyond what is rendered at the top level. This is pathetic, and this is what is supposed to lay waste to and supersede human intelligence? Really now.
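The following minimal Python sketch shows what “multi-layered” amounts to mechanically. It is an illustration under stated assumptions, not code from Larson’s book or from the Wikipedia article. Each layer computes weighted sums of the previous layer’s outputs and passes them through a simple nonlinearity, and the “depth” is nothing more than the number of such layers stacked in sequence. The weights are arbitrary hypothetical values. In an actual deep learning system they would be fitted to training data, a step omitted here.

```python
# A minimal sketch of a multi-layered ("deep") feed-forward network.
# Assumption: weights and biases are arbitrary illustrative values, not learned.

def relu(x: float) -> float:
    """Rectified linear unit: keeps positive values, zeroes out negatives."""
    return max(0.0, x)

def identity(x: float) -> float:
    """No nonlinearity; used for the final (output) layer."""
    return x

def dense_layer(inputs, weights, biases, activation=relu):
    """One layer: each output is a weighted sum of all inputs plus a bias,
    passed through the activation function."""
    return [
        activation(sum(w * x for w, x in zip(row, inputs)) + b)
        for row, b in zip(weights, biases)
    ]

def forward(inputs, layers):
    """Feed the input through each layer in turn.
    Hidden layers use ReLU; the last layer is left linear."""
    activations = inputs
    for i, (weights, biases) in enumerate(layers):
        is_last = i == len(layers) - 1
        activations = dense_layer(
            activations, weights, biases, identity if is_last else relu
        )
    return activations

# Hypothetical three-layer network: 3 inputs -> 4 -> 2 -> 1 output.
LAYERS = [
    ([[0.5, -0.2, 0.1], [0.3, 0.8, -0.5], [-0.7, 0.2, 0.9], [0.1, 0.1, 0.1]],
     [0.0, 0.1, 0.0, -0.1]),
    ([[0.6, -0.4, 0.2, 0.3], [-0.1, 0.5, 0.7, -0.2]],
     [0.05, 0.0]),
    ([[1.0, -1.0]],
     [0.0]),
]

if __name__ == "__main__":
    print(forward([1.0, 0.5, -0.5], LAYERS))
```

Running it prints a one-element list whose value is close to -0.49. Every step is ordinary arithmetic on numbers. Making the network “deeper” just means appending more entries to the hypothetical `LAYERS` list.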